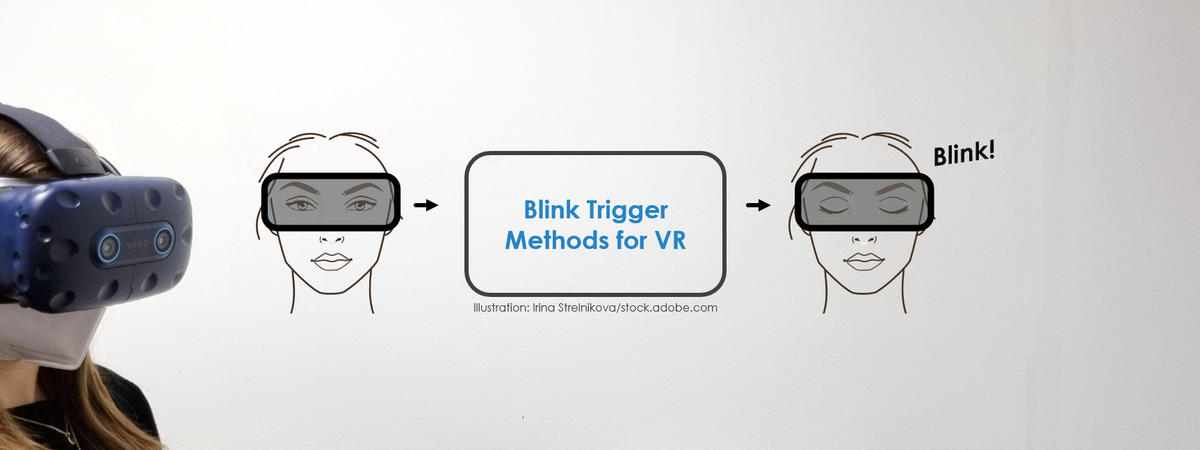

Induce a Blink of the Eye: Evaluating Techniques for Triggering Eye Blinks in Virtual Reality

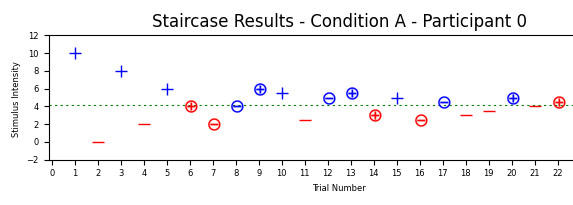

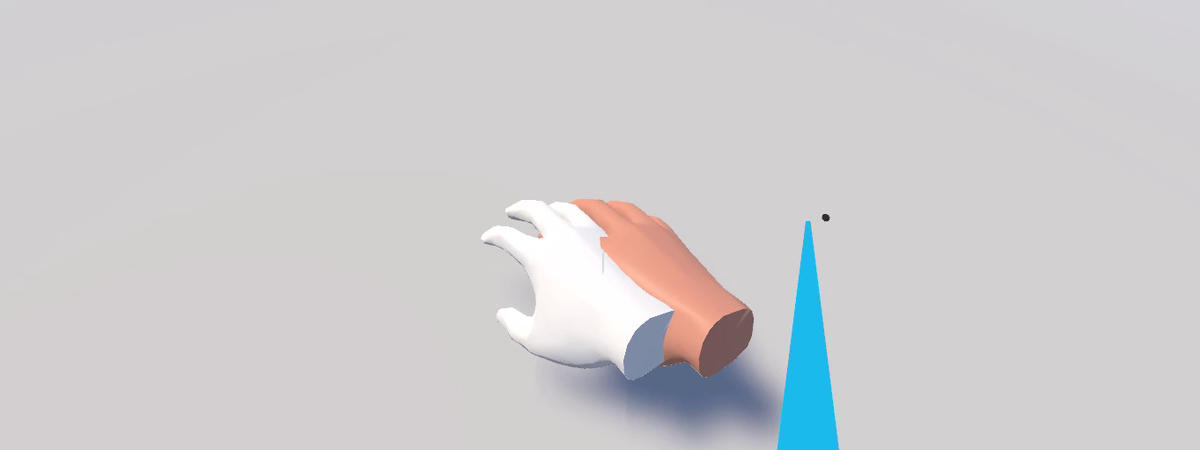

As more and more virtual reality (VR) headsets support eye tracking, recent techniques started to use eye blinks to induce unnoticeable manipulations to the virtual environment, e.g., to redirect users' actions. However, to exploit their full potential, more control over users' blinking behavior in VR is required. To this end, we propose a set of reflex-based blink triggers that are suited specifically for VR. In accordance with blink-based techniques for redirection, we formulate (i) effectiveness, (ii) efficiency, (iii) reliability, and (iv) unobtrusiveness as central requirements for successful triggers. We implement the soft- and hardware-based methods and compare the four most promising approaches in a user study. Our results highlight the pros and cons of the tested triggers, and show those based on the menace, corneal, and dazzle reflexes to perform best. From these results, we derive recommendations that help choosing suitable blink triggers for VR applications.